Wouldn’t it be great if analysts, testers and developers spoke the same language? When I develop something great, the tester says it is wrong and the analyst says it is not what he meant.

With SpecFlow the analyst can write what a tester can test and a developer can implement. They all speak the same language.

Setup

First, install the SpecFlow extension and the NuGet Package Manager. Now you are ready to start a new solution. I’ll use my Transportation demo as an example.

To add the SpecFlow references to the test project in your solution, run the following command in the NuGet Package Manager Console:

PM> Install-Package SpecFlow

You’re almost done setting up. SpecFlow must know which unit test framework to generate tests for; the default is NUnit, but I’m using MSTest. Set this in the specFlow section of App.config.

<unitTestProvider name="MsTest"/>

Features and Steps

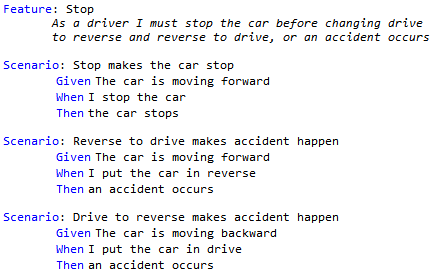

Add a new file to the unit test project and select the SpecFlow Feature File template. A feature file is written in the Gherkin language. SpecFlow binds each Given, When and Then step to code written in a SpecFlow Step Definition.

Stop feature in Gherkin with 3 test scenarios

Feature: Stop
  As a driver
  I must stop the car before changing drive to reverse and reverse to drive,
  or an accident occurs

  Scenario: Stop makes the car stop
    Given The car is moving forward
    When I stop the car
    Then the car stops

  Scenario: Reverse to drive makes accident happen
    Given The car is moving forward
    When I put the car in reverse
    Then an accident occurs

  Scenario: Drive to reverse makes accident happen
    Given The car is moving backward
    When I put the car in drive
    Then an accident occurs

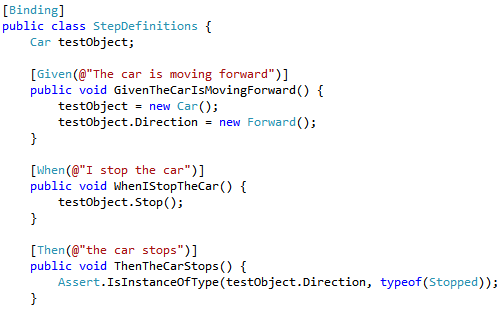

The SpecFlow extension provides a command to generate the SpecFlow Step Definition. After building, the Test Explorer shows your tests and you can run them. Since you have not yet implemented the logic for the steps, every test is inconclusive. Implementation can be done with the Refactor operations, as in my TDD demo: generate the Car and its operations and properties. Then follow the TDD cycle of Add Test, Pass Test and Refactor.
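As a rough sketch of where that generation step might end up, here is one possible shape for the Car. The names Car, Direction, Forward, Stopped and Stop() are the ones the step definition in this post uses; Backward, PutInReverse(), PutInDrive(), HasCrashed and the Demo class are names I made up for illustration, and the crash rule is only my reading of the feature text, not the actual demo code.

```csharp
using System;

// Direction is a small class hierarchy so a step can assert on its type.
public abstract class Direction { }
public sealed class Forward : Direction { }
public sealed class Backward : Direction { }   // hypothetical name
public sealed class Stopped : Direction { }

public class Car
{
    public Direction Direction { get; set; }

    // Hypothetical flag: becomes true when the gear is changed while moving.
    public bool HasCrashed { get; private set; }

    public Car()
    {
        Direction = new Stopped();
    }

    public void Stop()
    {
        Direction = new Stopped();
    }

    // Hypothetical operation behind "When I put the car in reverse".
    public void PutInReverse()
    {
        if (Direction is Forward)
            HasCrashed = true;          // still moving forward: accident
        else
            Direction = new Backward();
    }

    // Hypothetical operation behind "When I put the car in drive".
    public void PutInDrive()
    {
        if (Direction is Backward)
            HasCrashed = true;          // still moving backward: accident
        else
            Direction = new Forward();
    }
}

public static class Demo
{
    public static void Main()
    {
        // Given the car is moving forward, when I stop the car, then the car stops.
        var stopping = new Car { Direction = new Forward() };
        stopping.Stop();
        Console.WriteLine(stopping.Direction is Stopped);   // True

        // Given the car is moving forward, when I put the car in reverse,
        // then an accident occurs.
        var crashing = new Car { Direction = new Forward() };
        crashing.PutInReverse();
        Console.WriteLine(crashing.HasCrashed);             // True
    }
}
```

Modelling the accident as observable state rather than a thrown exception keeps a step such as "Then an accident occurs" down to a plain assertion on the car.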

Part of the Step Definition bound to the Gherkin from above

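using Microsoft.VisualStudio.TestTools.UnitTesting; // Assert (MSTest)
using TechTalk.SpecFlow;                            // Binding, Given, When, Then

[Binding]
public class StepDefinitions {
    // Car under test: created in the Given step, used by the When and Then steps
    Car testObject;

    [Given(@"The car is moving forward")]
    public void GivenTheCarIsMovingForward() {
        testObject = new Car();
        testObject.Direction = new Forward();
    }

    [When(@"I stop the car")]
    public void WhenIStopTheCar() {
        testObject.Stop();
    }

    [Then(@"the car stops")]
    public void ThenTheCarStops() {
        Assert.IsInstanceOfType(testObject.Direction, typeof(Stopped));
    }
}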

First impressions

- There is no ExpectedException. But this tests behavior: when the car is in an accident, you should be able to notice it from a change in behavior rather than from an exception.

- With this intermediate language, the gap between analyst, developer and tester just got smaller.

Further reading: