We use Azure DevOps Server to manage our solutions. The version we currently run offers YAML build pipelines and classic release pipelines. It is “easy” to commit and push to git from a YAML pipeline with the repository resource definition (details on Microsoft Learn). A classic pipeline needs some extra lifting.

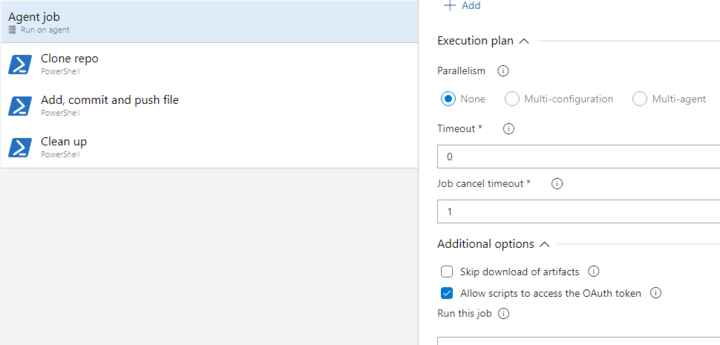

First enable “Allow scripts to access the OAuth token”. This makes $env:SYSTEM_ACCESSTOKEN available to scripts. The option is located on the Agent job, under Additional options.
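When the option is not enabled the token is simply empty, and git later fails with a less obvious authentication error. A minimal sketch of a guard that could sit at the top of each script; `Assert-AccessToken` is a hypothetical helper name, not something Azure DevOps provides:

```powershell
# Hypothetical helper: stop early when the OAuth token is not exposed to the script.
function Assert-AccessToken {
    param([string]$Token = $env:SYSTEM_ACCESSTOKEN)

    if ([string]::IsNullOrWhiteSpace($Token)) {
        throw 'SYSTEM_ACCESSTOKEN is empty. Enable "Allow scripts to access the OAuth token" on the Agent job.'
    }
}
```

Calling `Assert-AccessToken` without arguments checks the environment variable of the running task.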

Now you can clone the repo with a task such as PowerShell. Make sure to set the working directory.

Write-Host 'Clone repo'

# create base64 encoded version of the access token

$B64Pat = [Convert]::ToBase64String([System.Text.Encoding]::UTF8.GetBytes("`:$env:SYSTEM_ACCESSTOKEN"))

# git with extra header

git -c http.extraHeader="Authorization: Basic $B64Pat" clone https://AZURE_DEVOPS_URL/_git/REPOSITORY

# move into the repository folder

Push-Location REPOSITORY

git checkout main
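The base64 line is repeated in every task that talks to git. As a sketch, it could be wrapped in a small function that returns the complete header value; `Get-BasicAuthHeader` is a hypothetical name chosen for this example:

```powershell
# Hypothetical helper: builds the value for git's http.extraHeader option.
function Get-BasicAuthHeader {
    param([Parameter(Mandatory)][string]$Token)

    # Basic auth encodes "user:password"; the user name is left empty here,
    # the same as in the clone script.
    $bytes = [System.Text.Encoding]::UTF8.GetBytes(":$Token")
    return 'Authorization: Basic ' + [Convert]::ToBase64String($bytes)
}
```

Assigning the result to a variable first, for example `$header = Get-BasicAuthHeader -Token $env:SYSTEM_ACCESSTOKEN`, and then passing `http.extraHeader="$header"` keeps the git lines short.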

Make your change, then commit and push with another task. Make sure to set the same working directory, for example the folder with the artefact from the build.

Write-Host 'Add, commit and push file'

$B64Pat = [Convert]::ToBase64String([System.Text.Encoding]::UTF8.GetBytes("`:$env:SYSTEM_ACCESSTOKEN"))

# move into the repository folder

Push-Location REPOSITORY

# create / update file

Copy-Item -Path ../deployment.yaml -Destination overlays/dev/deployment.yaml -Force

# stage file

git add overlays/dev/deployment.yaml

# provide user info

git config user.email "info@erictummers.com"

git config user.name "build agent"

# commit using the build number

git commit -m "updated deployment to $(Build.BuildNumber)"

# pull and push

git -c http.extraHeader="Authorization: Basic $B64Pat" pull

git -c http.extraHeader="Authorization: Basic $B64Pat" push
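When the copied file is identical to the one already in git, nothing gets staged and `git commit` returns a non-zero exit code. A possible guard, assuming it runs inside the repository folder after `git add`; `Test-StagedChange` is a hypothetical helper name:

```powershell
# Hypothetical helper: returns $true when the index holds staged changes.
# "git diff --cached --quiet" exits with 0 when nothing is staged and 1 when something is.
# Any other exit code (a git error) is treated as "no changes" in this sketch.
function Test-StagedChange {
    git diff --cached --quiet
    return ($LASTEXITCODE -eq 1)
}
```

Wrapping the commit, pull and push lines in `if (Test-StagedChange) { ... }` would skip them when there is nothing new to commit.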

In the clean up we remove the cloned repository as a good practice. Again, make sure to set the same working directory.

Write-Host 'Clean up'

Remove-Item REPOSITORY -Recurse -Force
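If the clone task failed, the folder does not exist and `Remove-Item` reports an error of its own. A small sketch that only removes the folder when it is there; `Remove-Clone` is a hypothetical helper name:

```powershell
# Hypothetical helper: remove the cloned folder only when it exists,
# so a failed clone does not produce a second error in the clean up.
function Remove-Clone {
    param([Parameter(Mandatory)][string]$Path)

    if (Test-Path -LiteralPath $Path) {
        Remove-Item -LiteralPath $Path -Recurse -Force
    }
}
```

In the clean up task this would be called as `Remove-Clone -Path REPOSITORY`.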

Now the file is changed in git from a classic pipeline.