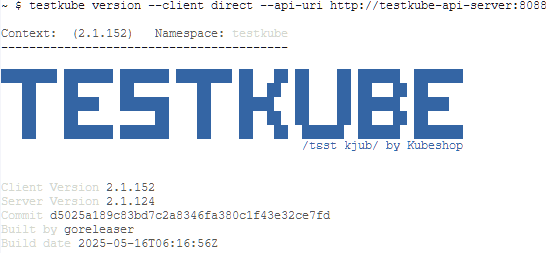

We moved our workloads to Kubernetes and now want to run our tests in the cluster. In this series I describe our journey with Testkube. This setup works for us; your mileage may vary. You can view all posts in this series by filtering on the tag testkube-journey.

TestWorkflow

Our app under test uses the Keycloak API to provide self-service to developers who want to use OAuth. Your app will most likely use some sort of API too.

What happens in a test is defined with a TestWorkflow. It describes the steps to be performed. Below are the steps we use:

- clean the environment by restarting keycloak

- wait for keycloak to be up-and-running again using curl to request a page

- start the tests in our e2etests container

The YAML is shown below (read on to see why this might fail):

```yaml
apiVersion: testworkflows.testkube.io/v1
kind: TestWorkflow
metadata:
  name: playwright-selfservice-testworkflow
spec:
  steps:
  - name: clean-environment
    container:
      image: registry.user-sb01.k8s.cbsp.nl/docker.io/bitnami/kubectl
    shell: 'kubectl scale deployment/keycloak -n selfservice --replicas=0'
  - name: wait-for-keycloak
    container:
      image: internal-registry-address/docker.io/curlimages/curl
    shell: 'while [ $(curl -ksw "%{http_code}" "http://keycloak.selfservice.svc/realms/master" -o /dev/null) -ne 200 ]; do sleep 5; echo "Waiting for keycloak..."; done'
  - name: playwright-tests
    container:
      image: internal-registry-address/company/e2etests
    shell: 'dotnet test /src --no-build'
```

Security context

TLDR: add securityContext and workingDir to the TestWorkflow to prevent private registry certificate errors during a run.

Running this from the testkube-cli was a bit frustrating. An error showed that it could not verify the certificate of the internal-registry-address:

failed to process test workflow: resolving image error: internal-registry-address/docker.io/curlimages/curl: inspecting image: 'internal-registry-address/docker.io/curlimages/curl' at '' registry: fetching 'internal-registry-address/docker.io/curlimages/curl' image from '' registry: reading image "internal-registry-address/docker.io/curlimages/curl": Get "https://internal-registry-address/v2/": tls: failed to verify certificate: x509: certificate signed by unknown authority

Turns out this is documented here: https://docs.testkube.io/articles/test-workflows-high-level-architecture#private-container-registries. The issue is that Testkube reads the securityContext and workingDir from the image metadata in the registry. To prevent this call to the registry we must provide that information in the TestWorkflow ourselves. Below we provide defaults for all the steps in the TestWorkflow.

```yaml
apiVersion: testworkflows.testkube.io/v1
kind: TestWorkflow
metadata:
  name: playwright-selfservice-testworkflow
spec:
  pod:
    securityContext:
      runAsUser: 1001
      runAsGroup: 1001
  container:
    workingDir: /data
  steps:
  - name: clean-environment
    container:
      image: registry.user-sb01.k8s.cbsp.nl/docker.io/bitnami/kubectl
    shell: '.. removed for clarity ...'
  - name: wait-for-keycloak
    container:
      image: internal-registry-address/docker.io/curlimages/curl
    shell: '.. removed for clarity ...'
  - name: playwright-tests
    container:
      image: internal-registry-address/company/e2etests
    shell: '.. removed for clarity ...'
```

Some steps need different rights. You can override the defaults per step by adding a securityContext under that step's container config. Here is an example that runs dotnet test as root.

```yaml
apiVersion: testworkflows.testkube.io/v1
kind: TestWorkflow
metadata:
  name: playwright-selfservice-testworkflow
spec:
  pod:
    securityContext:
      runAsUser: 1001
      runAsGroup: 1001
  container:
    workingDir: /data
  steps:
  - name: clean-environment
    container:
      image: registry.user-sb01.k8s.cbsp.nl/docker.io/bitnami/kubectl
    shell: '.. removed for clarity ...'
  - name: wait-for-keycloak
    container:
      image: internal-registry-address/docker.io/curlimages/curl
    shell: '.. removed for clarity ...'
  - name: playwright-tests
    container:
      image: internal-registry-address/company/e2etests
      securityContext:
        runAsUser: 0
        runAsGroup: 0
    shell: '.. removed for clarity ...'
```

Now the first step starts without the certificate error, but fails because it is not authorised to change the Kubernetes resources. To fix this we add a service account.

Service account name

TLDR: add a ServiceAccount resource and set serviceAccountName in the TestWorkflow to manage Kubernetes resources from your TestWorkflow.

To allow the service account to scale the keycloak deployment (GitOps then scales it back up) we must provide a Role and a RoleBinding. You can find excellent documentation here: https://kubernetes.io/docs/reference/access-authn-authz/rbac/. We used a Role rather than a ClusterRole so that the rights are limited to a namespace.
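As an illustration, the three resources could look roughly like the sketch below. Only the service account name deployment-restart-account, the selfservice namespace and the keycloak deployment come from our TestWorkflow. The testkube namespace for the service account and the names keycloak-scaler and keycloak-scaler-binding are assumptions made for this example. The verb list is also an assumption about what kubectl scale needs, so check it against your own cluster.

```yaml
# Service account used by the TestWorkflow pods.
# Assumption: the workflow runs in the "testkube" namespace.
apiVersion: v1
kind: ServiceAccount
metadata:
  name: deployment-restart-account
  namespace: testkube
---
# Namespaced Role in the namespace of the deployment we want to scale.
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: keycloak-scaler            # hypothetical name
  namespace: selfservice
rules:
# kubectl looks up the deployment before scaling it.
- apiGroups: ["apps"]
  resources: ["deployments"]
  verbs: ["get"]
# Scaling itself goes through the scale subresource.
- apiGroups: ["apps"]
  resources: ["deployments/scale"]
  verbs: ["get", "update", "patch"]
---
# Bind the Role to the service account from the other namespace.
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: keycloak-scaler-binding    # hypothetical name
  namespace: selfservice
subjects:
- kind: ServiceAccount
  name: deployment-restart-account
  namespace: testkube              # assumption, see above
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: keycloak-scaler
```

Because the Role and RoleBinding live in the selfservice namespace, the service account gets no rights anywhere else in the cluster.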

The complete TestWorkflow now looks like this:

```yaml
apiVersion: testworkflows.testkube.io/v1
kind: TestWorkflow
metadata:
  name: playwright-selfservice-testworkflow
spec:
  pod:
    securityContext:
      runAsUser: 1001
      runAsGroup: 1001
    serviceAccountName: deployment-restart-account
  container:
    workingDir: /data
  steps:
  - name: clean-environment
    container:
      image: registry.user-sb01.k8s.cbsp.nl/docker.io/bitnami/kubectl
    shell: 'kubectl scale deployment/keycloak -n selfservice --replicas=0'
  - name: wait-for-keycloak
    container:
      image: internal-registry-address/docker.io/curlimages/curl
    shell: 'while [ $(curl -ksw "%{http_code}" "http://keycloak.selfservice.svc/realms/master" -o /dev/null) -ne 200 ]; do sleep 5; echo "Waiting for keycloak..."; done'
  - name: playwright-tests
    container:
      image: internal-registry-address/company/e2etests
      securityContext:
        runAsUser: 0
        runAsGroup: 0
    shell: 'dotnet test /src --no-build'
```

Running this with the testkube-cli (see Testkube journey – where we start) will show something like this:

As you can see, all tests Passed. But what do you do when some tests have Failed? For this you can save artifacts. More about that in a future post.